Warp-Speed Wednesdays

Your Must-Read Tech Updates: Starship Flight 4 Tomorrow, AI Factories, AI Avatars Coming in Hot, and the Need for More Robot Data

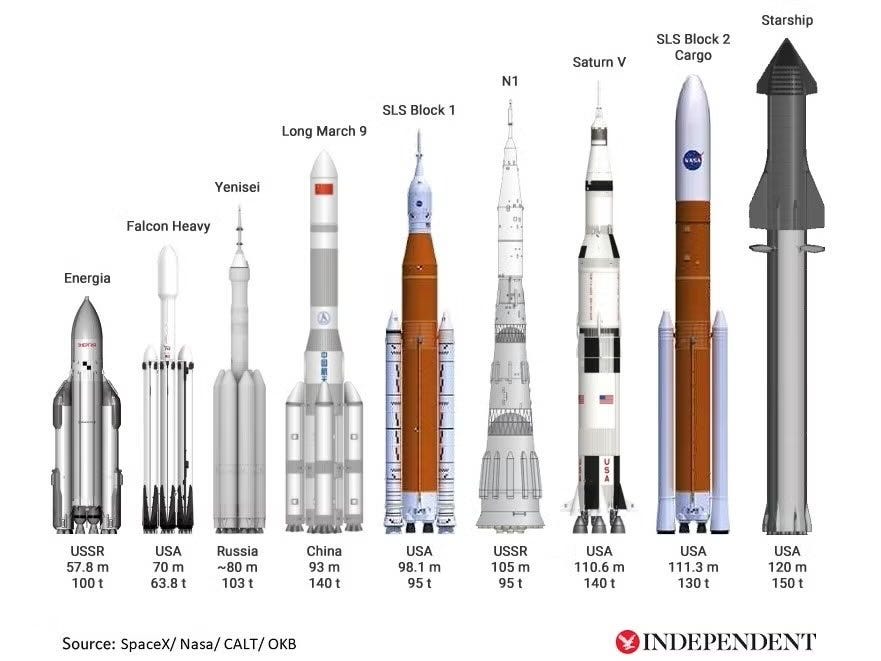

Starship: Flight 4 is Scheduled for Tomorrow at 10 am

Warp Highlights:

In some Warp-Speed updates, I will have this brief section on popular industry news to highlight key events I think a broader audience should be aware of.

OpenAI has released its GPT-4o model to everyone.

Pros:

Pricing: GPT-4o is 50% cheaper than GPT-4 Turbo, coming in at $5/M input tokens and $15/M output tokens.

Rate limits: GPT-4o’s rate limits are 5x higher than GPT-4 Turbo—up to 10 million tokens per minute.

Speed: GPT-4o is 2x as fast as GPT-4 Turbo.

Vision: GPT-4o outperforms GPT-4 Turbo on vision-related evals.

Multilingual: GPT-4o has improved support for non-English languages over GPT-4 Turbo.

GPT-4o currently has a context window of 128k and has a knowledge cut-off date of October 2023.

Cons: In my opinion, it has worse reasoning than GPT-4. I recommend GPT-4 for more complex problems like engineering, coding, math, etc.
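To make the pricing concrete, here is a minimal sketch of a cost estimate using the GPT-4o rates quoted above. The GPT-4 Turbo rates are an assumption derived from the "50% cheaper" claim (double the GPT-4o rates), and the workload size and function name are hypothetical, chosen only for illustration.

```python
# Prices quoted above for GPT-4o, in USD per million tokens.
GPT4O_INPUT_USD_PER_M = 5.0
GPT4O_OUTPUT_USD_PER_M = 15.0

# Assumption: derived from the "50% cheaper" claim, not quoted in this post.
GPT4_TURBO_INPUT_USD_PER_M = GPT4O_INPUT_USD_PER_M * 2
GPT4_TURBO_OUTPUT_USD_PER_M = GPT4O_OUTPUT_USD_PER_M * 2


def estimate_cost_usd(input_tokens, output_tokens, input_rate, output_rate):
    """Return the estimated cost in USD given per-million-token rates."""
    return (input_tokens * input_rate + output_tokens * output_rate) / 1_000_000


# Hypothetical workload: 200k input tokens and 50k output tokens.
gpt4o_cost = estimate_cost_usd(
    200_000, 50_000, GPT4O_INPUT_USD_PER_M, GPT4O_OUTPUT_USD_PER_M
)
turbo_cost = estimate_cost_usd(
    200_000, 50_000, GPT4_TURBO_INPUT_USD_PER_M, GPT4_TURBO_OUTPUT_USD_PER_M
)

print(f"GPT-4o: ${gpt4o_cost:.2f}")
print(f"GPT-4 Turbo (derived): ${turbo_cost:.2f}")
```

For this example workload, the sketch prints $1.75 for GPT-4o and $3.50 for the derived GPT-4 Turbo rates. Check the current pricing page before budgeting, since rates change.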

OpenAI disbanded its Superalignment team, and some of its ex-employees are explaining why. I leave it to you to form your own opinion on the matter.

SanctuaryAI patented an idea for artificial skin for their robots.

Elon ordered so many GPUs for AI training that he doesn’t have a place to house them. Tim Zaman, ex-Tesla Infra Lead Engineer, says they need an army of workers to get these operational.

AI: The Industry of Generating Intelligence

NVIDIA’s AI Factories: The New Widget is Generated Intelligence

Why is this important: Jensen Huang's latest keynote focuses on the transformative potential of AI factories to reliably manufacture intelligence in the digital and physical world.

Jensen Huang, the CEO of NVIDIA, introduced the concept of AI factories: multi-billion-dollar data centers, each guzzling as much energy as a city or state and requiring its own power plant.

These AI factories will produce AI-generated outputs called tokens. These tokens can represent any form of data—words, images, steering wheel control for cars, robotic movements, proteins, weather patterns, etc.

But, why does this matter?

Huang emphasizes our transition into the generative AI era, where computers create knowledge from their training data rather than simply searching for and relaying it. He argues that the methodology for creating AI factories is highly scalable and repeatable, enabling the rapid development and deployment of new generative AI models across industries.

The economic implications of AI factories are staggering. While the IT industry is valued at around $3 trillion, AI factories have the potential to directly serve and transform industries worth over $100 trillion. By efficiently generating valuable outputs, AI factories can revolutionize manufacturing, services, and other sectors, driving innovation and creating new opportunities.

I highly recommend that you watch the entire Keynote by clicking the yellow title of this section.

Microsoft CTO Kevin Scott: Emerging AI Models Demonstrate Enhanced Reasoning Capabilities

Why is this important: The development of AI models with improved reasoning abilities could lead to more intelligent and context-aware AI systems, which could open the door to reliable AI agents that work for you.

In a recent statement, Microsoft CTO Kevin Scott highlighted the promising advancements in next-generation AI models, particularly in their ability to demonstrate better reasoning capabilities. This development marks a significant milestone in the field of artificial intelligence, as improved reasoning skills could enable AI systems to tackle more complex problems and make more informed decisions.

Lifelike Digital Avatars Coming to a Screen Near You: Microsoft’s VASA-1

Why is this important: VASA introduces a groundbreaking framework for generating realistic talking faces with natural facial expressions and head movements from a single image and speech audio, getting us closer to the sci-fi moments of the film Her.

Samantha: “You know, I actually used to be so worried about not having a body, but now I truly love it. I'm growing in a way that I couldn't if I had a physical form. I mean, I'm not limited - I can be anywhere and everywhere simultaneously. I'm not tethered to time and space in the way that I would be if I was stuck inside a body that's inevitably going to die.”

VASA (Visual Affective Skills Animation) is a framework that brings static images to life by generating lifelike talking faces with appealing visual affective skills (VAS). Given a single static image and a speech audio clip, VASA-1, the premiere model of the framework, can produce lip movements that are perfectly synchronized with the audio while also capturing a wide range of facial nuances and natural head motions. These elements contribute to the perception of authenticity and liveliness, making the generated talking faces more engaging and realistic.

At the core of VASA's innovations is a diffusion-based holistic facial dynamics and head movement generation model that operates in a face latent space. This latent space is developed using videos, enabling the model to capture and generate expressive and disentangled facial features.

Through extensive experiments and evaluation using a set of new metrics, VASA has demonstrated significant improvements over previous methods across various dimensions, delivering high-quality videos with realistic facial and head dynamics. One of the key advantages of VASA is its ability to support online generation of 512×512 videos at up to 40 frames per second (FPS) with negligible starting latency.

Robotics: We Want Data NOW!

Carnegie Mellon Researchers Introduce SPIN: Selective Perception and Integrated Navigation for Mobile Manipulation

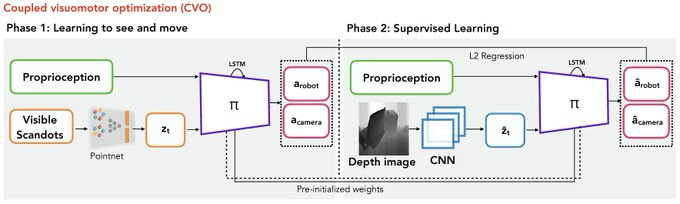

Why is this important: SPIN takes the end-to-end neural network approach that researchers are focusing on to build reliable and adaptable robots. Combining selective perception with an actuated camera enables mobile manipulators to decide what to perceive and to optimize joint action-perception for enhanced hand-eye coordination, all within a single policy.

By integrating perception and action, SPIN eliminates the need for separate modules, reduces compounding errors, and enables real-time decision-making. The end-to-end nature of SPIN's neural network simplifies the training process and makes robotic systems more efficient, adaptable, and intelligent in applications such as household assistance, industrial automation, and search and rescue operations.

RoboCasa: A Safe Space to Train Robots Using Sim

Why is this important: The robotics industry is starving for data to train neural networks, and researchers and industry alike are funneling effort into gathering data quicker than a chancleta thrown by your mother after you forgot to take out the trash.

RoboCasa is a simulation framework that harnesses the power of generative AI tools to train robots for everyday tasks, with a focus on kitchen scenes. By leveraging large language models (LLMs) and text-to-image/3D generative models, RoboCasa creates realistic and diverse human-centered environments, offering over 2,500 3D assets across 150+ object categories and numerous interactable furniture and appliances. The framework includes a suite of 100 tasks, representing a wide range of everyday activities, providing a solid foundation for training robots to adapt to various real-world situations.

By combining realistic environments, diverse tasks, and extensive training data, RoboCasa enables researchers and developers to train robots that are more versatile, adaptable, and capable of handling the complexities of real-world scenarios.